This post was motivated by two recent tweets by Dr. Diego Kuonen, Principal of Statoo Consulting in Switzerland (who you should definitely follow if you don’t already – he’s one of the few other people in the world who thinks about data science and quality). First, he shared a slide show from CIO Insight with this clickbaity title, bound to capture the attention of any manager who cares about their bottom line (yeah, they’re unicorns):

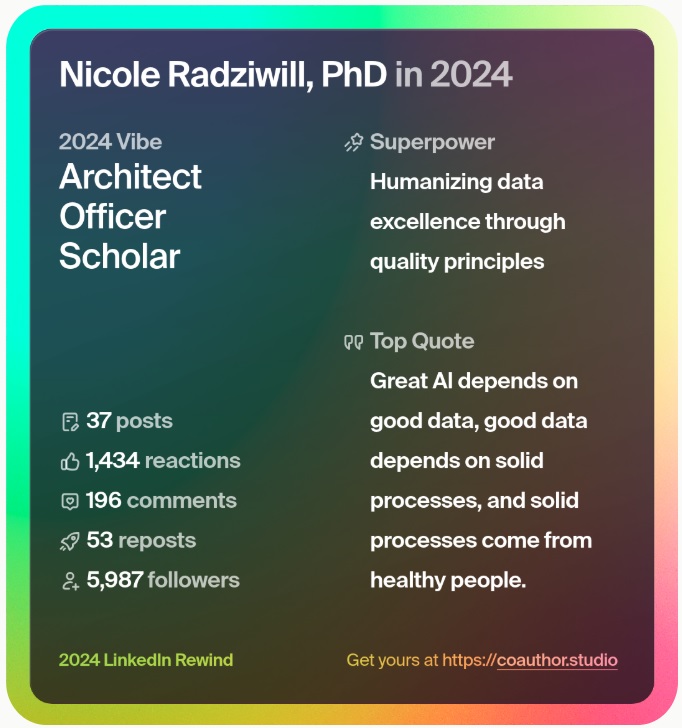

I’m so happy this message is starting to enter corporate consciousness, because I lived it throughout the 2000s while working on data management for the National Radio Astronomy Observatory (NRAO). I published several papers during that time that present the following position on this theme (the papers are listed at the bottom of this post):

- First, storing data means you’ve saved it to physical media; archiving data implies that you are storing data over a longer (and possibly very long) time horizon.

- Even though storage is cheap, don’t store (or archive) everything. Inventories have holding costs, and data warehouses are no different (even though those electrons are so, so tiny).

- Archiving data that is of dubious quality is never advised. (It’s like piling your garage full of all those early drafts of every paper you’ve ever written… and having done this, I strongly recommend against it.)

- Sometimes it can be hard to tell whether the raw data we’re collecting is fundamentally good or bad — but we have to try.

- Data science provides fantastic techniques for learning what is meant by data quality, and then automating the classification process.

- The intent of whoever collects the data is bound to be different from the intent of whoever uses the data in the future.

- If we do not capture intent, we are significantly suppressing the potential that the data asset will have in the future.
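
To make the last few points a little more concrete, here is a minimal Python sketch of a rule-based quality gate that runs before anything goes into the archive. Everything in it is an assumption for illustration: the `Dataset` class, the rule names, and the 90% threshold are hypothetical, and this is not the system described in the papers listed at the bottom of this post. The idea is simply that each rule encodes one piece of what "quality" means in your context, and that recording intent is treated as a requirement rather than an afterthought.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class Dataset:
    """Hypothetical container for a raw data product awaiting archiving."""
    name: str
    values: list[float]
    intent: str = ""  # why the data was collected, in the collector's words


def mostly_finite(d: Dataset, threshold: float = 0.9) -> bool:
    """True when at least `threshold` of the values are finite numbers."""
    if not d.values:
        return False
    finite = sum(math.isfinite(v) for v in d.values)
    return finite / len(d.values) >= threshold


# Each rule returns True when the dataset passes.
# The rule names and the threshold are illustrative assumptions.
RULES: dict[str, Callable[[Dataset], bool]] = {
    "has_intent": lambda d: bool(d.intent.strip()),
    "not_empty": lambda d: len(d.values) > 0,
    "mostly_finite": mostly_finite,
}


def failed_rules(d: Dataset) -> list[str]:
    """Return the names of all rules the dataset fails, in rule order."""
    return [name for name, rule in RULES.items() if not rule(d)]


def should_archive(d: Dataset) -> bool:
    """Archive only when every quality rule passes."""
    return not failed_rules(d)


good = Dataset("scan_001", [1.0, 2.0, 3.5], intent="pointing calibration")
bad = Dataset("scan_002", [float("nan"), 1.0])

print(should_archive(good), failed_rules(good))
print(should_archive(bad), failed_rules(bad))
```

In this sketch a dataset with no recorded intent is rejected just like one full of unusable values. Real rules would of course be specific to your instrument, your business process, and whatever "good" means for the people who will use the data later.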

Although I hadn’t seen this when I was deeply enmeshed in the problem long ago, it totally warmed my heart when Diego followed up with this quote from Deming in 1942:

In my opinion, the need for a dedicated focus on understanding what we mean by data quality (for our particular contexts) and then working to make sure we don’t load up our Big Data opportunities with Bad Data liabilities will be the difference between competitive and combustible in the future. Mind your data quality before your data science. It will also positively impact the sustainability of your data archive.

Papers where I talked about why NOT to archive all your data are here:

- Radziwill, N. M., 2006: Foundations for Quality Management of Scientific Data Products. Quality Management Journal, v13 Issue 2 (April), p. 7-21.

- Radziwill, N. M., 2006: Valuation, Policy and Software Strategy. SPIE, Orlando FL, May 25-31.

- Radziwill, N.M. and R. DuPlain, 2005: A Framework for Telescope Data Quality Management. Proc. SPIE, Madrid, Spain, October 2-5, 2005.

- DuPlain, R. F. and N.M. Radziwill, 2006: Autonomous Quality Assurance and Troubleshooting. SPIE, Orlando FL, May 25-31.

- DuPlain, R., Radziwill, N.M., & Shelton, A., 2007: A Rule-Based Data Quality Startup Using PyCLIPS. ADASS XVII, London UK, September 2007.
