-

A Magical 1997-era Web Form & the Illusion of Progress

This morning, while I was checking my email, the power went out. There was no storm, the neighbors still had power… did I forget to pay the bill? Surely not… I get an email every month & immediately click through to process the payment. But I decided to check anyway.…

-

Agile Should Not Make You Feel Bad

Agility can be great (even though for many neurodivergent people, it’s the opposite of great). But when teams go through the motions to be “Agile” they often end up overcomplicating the work, adding stress to interactions, and achieving less agility. If your agile processes are “working”, then over the next…

-

Cogramming (Or: Pair Programming for People Who “Don’t Like It”)

I saw a bunch of threads this morning on Twitter about pair programming, one of the core practices of XP and a cornerstone of agile. The arguments were diametrically opposed, either: it’s great, and the overhead is necessary and leads to long-term value; versus it’s terrible, because I need to get in…

-

The Discovery Channel Test

Presentations can be boring. Talking about your work can be boring (to other people; you’ll probably be just fine). When you’re sitting in a talk or a session that you find boring (and you can’t figure out WIIFM – “what’s in it for me”)… you learn less. Although we shouldn’t…

-

“Documentation” is a Dirty Word

I just skimmed through another 20-page planning document, written in old-school “requirements specification”-ese with a hefty dose of ambiguity. You know, things like “the Project Manager shall…” and “the technical team will respond to bugs.” Sigh. There’s a lot of good content in there. It’s not easy to get…

-

Scrum and the Illusion of Progress

Agile is everywhere. Sprints are everywhere. Freshly trained Scrum practitioners and established devotees in the guise of Scrum Masters beat the drum of backlog grooming sessions and planning poker and demos and retros. They are very busy, and provide the blissful illusion of progress. (Full disclosure: I am a Sprint…

-

Why Software Estimation is (Often) Useless

For 12 years, I blogged and wrote a whole bunch. For the past year and a half, I’ve let myself be pulled away from so many of the things that make me me… including writing. Today I heard one of the best anecdotes ever, and it’s the spark that will…

Hello,

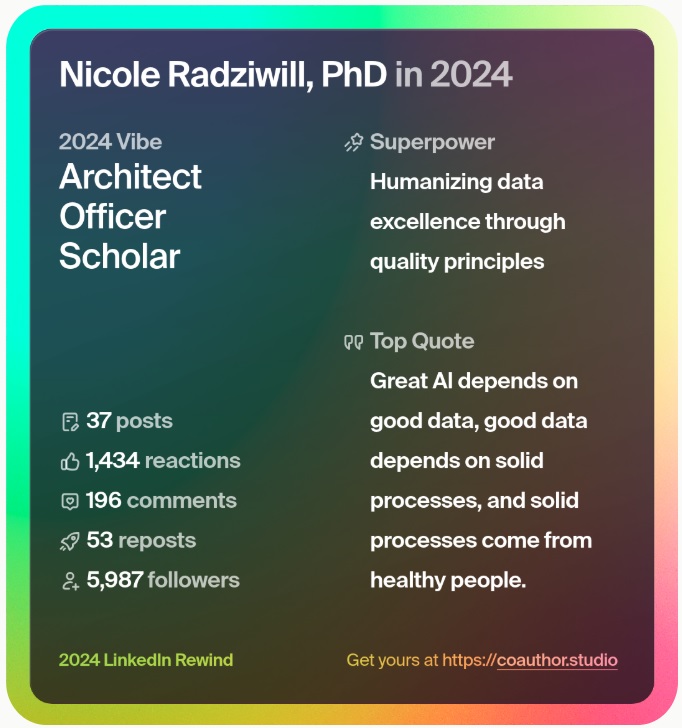

I’m Nicole

Since 2008, I’ve been sharing insights and expertise on Digital Transformation & Data Science for Performance Excellence here. As a CxO, I’ve helped orgs build empowered teams, robust programs, and elegant strategies bridging data, analytics, and artificial intelligence (AI)/machine learning (ML)… while building models in R and Python on the side. In 2025, I help leaders drive Quality-Driven Data & AI Strategies and navigate the complex market of data/AI vendors & professional services. Need help sifting through it all? Reach out to inquire, and check out my new book, Data, Strategy, Culture & Power, which reveals the one thing EVERY organization has been neglecting.